Someone is going to have to stop these damned boss’s pet scientists from coming up with yet more ways of stopping us slacking.

Pattern-recognition software to figure out if someone is faking pain. Just one more tool for The Man to squeeze more vital force out of us beleaguered saps feeding his insatiable hunger for money, power and OUR VERY SOULS.

Might be useful for footie referees though….

A joint study by researchers at the University of California San Diego and the University of Toronto has found that a computer system spots real or faked expressions of pain more accurately than people can.

The work, titled “Automatic Decoding of Deceptive Pain Expressions,” is published in the latest issue of Current Biology.

Distinctive dynamic features of facial expressions

“The computer system managed to detect distinctive dynamic features of facial expressions that people missed,” said Marian Bartlett, research professor at UC San Diego’s Institute for Neural Computation and lead author of the study. “Human observers just aren’t very good at telling real from faked expressions of pain.”

Senior author Kang Lee, professor at the Dr. Eric Jackman Institute of Child Study at the University of Toronto, said, “Humans can simulate facial expressions and fake emotions well enough to deceive most observers. The computer’s pattern-recognition abilities prove better at telling whether pain is real or faked.”

The research team found that humans could not discriminate real from faked expressions of pain better than random chance – and, even after training, only improved their accuracy to a modest 55 percent. The computer system attains 85 percent accuracy.

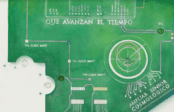

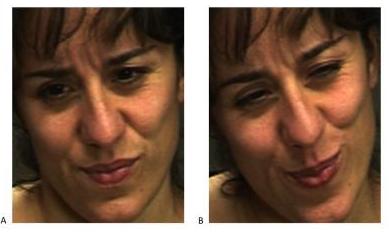

Which expression do you think shows real pain? Attempts to fake expressions of pain typically involve the same facial muscles that are contracted during real pain. There is no telltale facial muscle whose presence or absence would indicate real or faked pain. The difference is in the dynamics. Human observers perform at chance when telling them apart. The machine learning and computer vision system described by Bartlett et al. detects faked pain significantly better than humans do. The system detects distinctive dynamic features of expression missed by humans. Spontaneous and deliberate facial movements are controlled by distinct motor pathways that differ in their dynamics. By revealing the dynamics of facial action through machine vision systems, the approach in Bartlett et al. has the potential to elucidate behavioral fingerprints of neural control systems involved in emotional signaling. The real expression of pain is image B on the right.

People can simulate emotion

“In highly social species such as humans,” said Lee, “faces have evolved to convey rich information, including expressions of emotion and pain. And, because of the way our brains are built, people can simulate emotions they’re not actually experiencing – so successfully that they fool other people. The computer is much better at spotting the subtle differences between involuntary and voluntary facial movements.”

“By revealing the dynamics of facial action through machine vision systems,” said Bartlett, “our approach has the potential to elucidate ‘behavioral fingerprints’ of the neural-control systems involved in emotional signaling.”

Fakers’ mouths open with less variation

The single most predictive feature of falsified expressions, the study shows, is the mouth, and how and when it opens. Fakers’ mouths open with less variation and too regularly.

“Further investigations,” said the researchers, “will explore whether over-regularity is a general feature of fake expressions.”
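To make "less variation" a bit more concrete, here is a minimal Python sketch of one way regularity could be put into a number: the coefficient of variation (standard deviation divided by mean) of how long each mouth opening lasts. This is not the study's method, which is not described here. The function name and the durations below are hypothetical, invented purely for illustration.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values):
    """Return standard deviation divided by mean; 0.0 means perfectly regular."""
    avg = mean(values)
    if avg == 0:
        raise ValueError("mean is zero; coefficient of variation is undefined")
    return pstdev(values) / avg


# Hypothetical durations (in seconds) of successive mouth openings.
# These numbers are made up for illustration, not taken from the study.
regular_openings = [0.50, 0.52, 0.49, 0.51, 0.50]  # suspiciously even
varied_openings = [0.20, 0.85, 0.40, 1.10, 0.30]   # irregular

regular_cv = coefficient_of_variation(regular_openings)
varied_cv = coefficient_of_variation(varied_openings)

# A lower value means the openings are more uniform in length.
print(f"regular: {regular_cv:.2f}, varied: {varied_cv:.2f}")
```

A lower score only says the openings are more uniform. Where to draw the line between "natural" and "too regular" is exactly the sort of thing a real system would have to learn from data.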

In addition to detecting pain malingering, the computer-vision system might be used to detect other real-world deceptive actions in the realms of homeland security, psychopathology, job screening, medicine, and law, said Bartlett.

“As with causes of pain, these scenarios also generate strong emotions, along with attempts to minimize, mask, and fake such emotions, which may involve ‘dual control’ of the face,” she said. “In addition, our computer-vision system can be applied to detect states in which the human face may provide important clues as to health, physiology, emotion, or thought, such as drivers’ expressions of sleepiness, students’ expressions of attention and comprehension of lectures, or responses to treatment of affective disorders.”

Source: University of California – San Diego

Photo: Kang Lee, Marian Bartlett
